In this scan, the Rathenau Instituut describes a technology that is still in development, in order to guide its further development and introduction into society from a public values perspective. The scan focuses on immersive technologies: it shows in which social domains these technologies are already being applied (experimentally) and analyses the risks through the lens of public values. It also includes an analysis of relevant policies and an overview of options for action to mitigate the risks identified.

The scan is the result of a short-term study based on the Rathenau Instituut's knowledge base, supplemented by a literature review, working sessions, and interviews with researchers and experts. It aims to inform policymakers and politicians about immersive technologies. The scan was developed at the request of the Ministry of the Interior and Kingdom Relations.

Under the umbrella term ‘immersive technologies’ we include a collection of technologies that immerse users in fully virtual worlds or in a hybrid mix of physical and digital worlds. The two main technologies that make this possible are augmented reality (AR) and virtual reality (VR). In AR, a user sees a virtual layer over the physical world; in VR, the user enters a fully virtual environment.

With immersive technologies, it becomes possible to experience a new kind of "realness" that can also be considered reality, even if the experience is fully or partially virtual. The technology comes much closer to the skin and to the senses than smartphones or computers do.

The impact of immersive technologies on society depends strongly on a large-scale consumer breakthrough. We do not know whether that breakthrough will happen, or when.

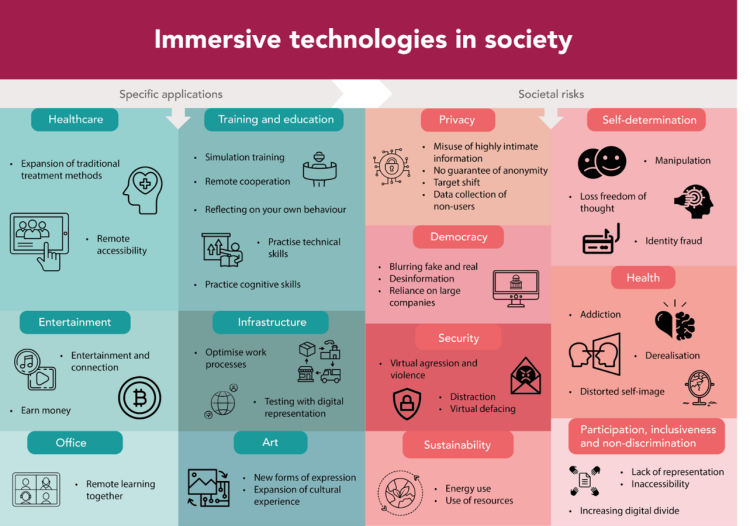

We do already see concrete applications of immersive technologies in several domains, as well as promises and investments aimed at developing particular applications. The domains in which we see the most experiments and applications are healthcare, training and education, entertainment, infrastructure, industry, office work, and art.

In this scan, the Rathenau Instituut identifies several risks involved in the further development and possibly widespread adoption of immersive technologies. When accompanied by the large-scale collection of physical and behavioral data by companies, immersive technologies can have a major impact on privacy, self-determination, democracy, and security. Furthermore, the far-reaching digitisation of society, of which immersive technologies are a part, carries more generic risks that affect inclusivity, participation and non-discrimination, and possibly sustainability.

The policies surrounding immersive technologies are in a state of flux. We discuss a selection of European laws aimed at managing risks associated with immersive technologies. These include the General Data Protection Regulation, the Artificial Intelligence Act, and the Digital Services Act. In combination, these laws can limit the opportunities for influence and manipulation based on physical and behavioral data collected in XR (extended reality, the collective term for technologies such as AR and VR). At the same time, there are a number of policy gaps and ambiguities. For example, XR providers may collect all kinds of physical and behavioral data if users consent, even though potentially very sensitive information can be derived from such data. Nor do these policies fully cover the risk of 'doelverschuiving' (purpose shifting): information collected in one context can be used in another, against the interests of users. There is also uncertainty about the protection of neurodata.

Incentives are in place to create opportunities for Dutch and European businesses in the XR market. We discuss the investment from the Groeifonds for the Creative Industries Immersive Impact Coalition (CIIIC), the European Initiative on Virtual Worlds, and the Digital Markets Act. However, as investment in immersive technologies grows and these technologies are adopted more widely in society, the risks also become more likely to materialise.

We formulate a number of options for action for politicians and policymakers to mitigate the risks of immersive technologies. However, some risks are inherent to these technologies and will remain if they are widely adopted, because of the intimate data they collect. Once this data is available, it may be used for other purposes that run against the public interest.

Politicians will have to make choices on some fundamental issues. Where can immersive technologies help sustain and realise public values (e.g., in therapeutic applications that have demonstrable health benefits), and where should these technologies not be applied at all because they affect public values too much (e.g., large-scale adoption of data-collecting XR devices in schools)? Are there certain types of data, such as neurodata and pupillary reflexes, that should not be collected at all because they reveal so much about us and abuse is a realistic scenario? And to what extent is further hyper-personalization desirable in public spaces, or should certain domains remain XR-free?

Because immersive technologies have not yet seen a large-scale breakthrough, policymakers and politicians still have the opportunity to steer the development and adoption of these technologies. The challenge for policymakers and politicians is therefore to determine how the government wants to adjust the innovation dynamics surrounding immersive technologies, based on its duty to protect citizens' rights and public values.

Researchers at the Rathenau Instituut developed a method for early identification of the public values at stake in emerging technologies, when there is still much uncertainty about their impact on society. The research enables policymakers, politicians, and other parties to anticipate new technological developments in a socially responsible way.

The Rathenau Scan 'Immersive Technologies' was developed at the request of the Ministry of the Interior and Kingdom Relations and contains options for policy.