Scrolling to the ballot box

The role of recommendation algorithms in election interference

Report

At the request of the House of Representatives Committee on Digital Affairs, the Rathenau Instituut investigated the role that recommendation systems used by large social media platforms can play in election interference.

We analysed academic, legal and grey literature such as journalistic sources, reports and court case reports. In addition, we interviewed representatives of a number of platforms. We also spoke to policy officers involved in elections, platform policy and interference. Furthermore, during an expert session, we consulted experts from different fields, including political communication, computer science, law and media studies.

Interference

Interference is influence by or on behalf of a foreign state actor that is contrary to the sovereignty, values or interests of the country being influenced. The actions are coercive, covert, deceptive or corrupting. Interference can pit people against each other, influence the outcome of elections and sow doubt about the democratic process.

Interference via social media platforms

We focus on a relatively new form of interference that is becoming increasingly accessible and sophisticated: interference via the recommendation systems of social media platforms.

By interference via social media platforms, we mean the orchestrated use of multiple accounts, pages or platforms by foreign state actors and/or their representatives to increase or decrease the visibility of certain content. They do this by exploiting the recommendation systems of social media platforms. The actors involved deliberately attempt to mislead the recipient of the content about the identity, motives, actions or connections of the actors involved and/or the popularity of the content. The ultimate goal is to influence public opinion or elections.

Recommendation systems

Recommendation systems sort and rank content based on data, with the aim of recommending potentially interesting content to users. Recommendation systems are a response to the problem of information overload on the internet: from all the available content, they try to show you something that you will like, find interesting or otherwise relevant.

Platforms and their designers favour content that keeps users on the platform for a long time and brings them back often. Since 2016, many large platforms have switched to ranking content based on expected engagement (likes, comments, time spent, etc.), because more engagement means higher advertising revenue.

As a result, engagement-based recommendation systems can make certain content more visible (amplify it).
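To make the amplification mechanism concrete, here is a minimal sketch of engagement-based ranking. It is not any platform's actual algorithm: real systems predict engagement with machine-learned models, while this sketch uses observed counts as a stand-in, and the `WEIGHTS` values and the example posts are hypothetical. It shows how a burst of coordinated interactions can push a post above organic content.

```python
from dataclasses import dataclass

# Hypothetical weights: platforms do not publish their ranking formulas,
# so these numbers are illustrative assumptions only.
WEIGHTS = {"likes": 1.0, "comments": 3.0, "shares": 5.0, "watch_seconds": 0.1}


@dataclass
class Post:
    post_id: str
    likes: int = 0
    comments: int = 0
    shares: int = 0
    watch_seconds: float = 0.0


def engagement_score(post: Post) -> float:
    """Weighted sum of engagement signals (a simplified stand-in for expected engagement)."""
    return (
        WEIGHTS["likes"] * post.likes
        + WEIGHTS["comments"] * post.comments
        + WEIGHTS["shares"] * post.shares
        + WEIGHTS["watch_seconds"] * post.watch_seconds
    )


def rank_feed(posts: list[Post]) -> list[str]:
    """Return post ids ordered from most to least engagement."""
    return [p.post_id for p in sorted(posts, key=engagement_score, reverse=True)]


if __name__ == "__main__":
    organic = Post("local_news", likes=120, comments=10, shares=5, watch_seconds=600)
    campaign = Post("divisive_clip", likes=40, comments=4, shares=2, watch_seconds=200)
    print(rank_feed([organic, campaign]))  # ['local_news', 'divisive_clip']

    # 50 coordinated accounts each like, comment on and share the clip once.
    campaign.likes += 50
    campaign.comments += 50
    campaign.shares += 50
    print(rank_feed([organic, campaign]))  # ['divisive_clip', 'local_news']
```

Because the ranking only sees the volume of engagement, not who produced it, a small number of orchestrated accounts can be enough to change what other users see first.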

The tools of interference

Inflammatory or manipulative content that is disseminated to influence voters is designed to elicit engagement, such as liking, forwarding or rewatching a video. Malicious (foreign) actors can exploit engagement-based recommendation systems to achieve a wide reach.

This research shows that the toolkit for interference activities on social media platforms is large and diverse, and that the possibilities for interference are becoming increasingly accessible and sophisticated. Social media platforms are constantly changing, and the tools change with them. Now that platforms rely more and more on recommendation systems, the actors behind interference are adapting their methods accordingly. For example, they use hyperactive accounts and coordinate their messages and hashtags.
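The two tactics named above leave traces that simple heuristics can look for. The sketch below is a hypothetical illustration, not a method described in the report or used by any platform: `HYPERACTIVE_PER_DAY`, `COORDINATION_WINDOW` and `MIN_COORDINATED_ACCOUNTS` are assumed thresholds, and a burst of accounts using the same hashtag can also have innocent causes (for example, a live news event), so a flag is a lead for investigation rather than proof of coordination.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, datetime, timedelta


@dataclass(frozen=True)
class Message:
    account: str
    hashtag: str
    posted_at: datetime


# Hypothetical thresholds chosen for illustration only.
HYPERACTIVE_PER_DAY = 100  # posts per day above which an account is flagged
COORDINATION_WINDOW = timedelta(minutes=5)
MIN_COORDINATED_ACCOUNTS = 3


def hyperactive_accounts(messages: list[Message]) -> set[str]:
    """Flag accounts whose post count on any single day exceeds the threshold."""
    counts: dict[tuple[str, date], int] = defaultdict(int)
    for m in messages:
        counts[(m.account, m.posted_at.date())] += 1
    return {acc for (acc, _day), n in counts.items() if n > HYPERACTIVE_PER_DAY}


def coordinated_hashtags(messages: list[Message]) -> dict[str, set[str]]:
    """Find hashtags pushed by many distinct accounts within one short time window."""
    by_tag: dict[str, list[Message]] = defaultdict(list)
    for m in messages:
        by_tag[m.hashtag].append(m)

    flagged: dict[str, set[str]] = {}
    for tag, msgs in by_tag.items():
        msgs.sort(key=lambda m: m.posted_at)
        start = 0
        # Sliding window over the time-sorted messages for this hashtag.
        for end in range(len(msgs)):
            while msgs[end].posted_at - msgs[start].posted_at > COORDINATION_WINDOW:
                start += 1
            accounts = {m.account for m in msgs[start : end + 1]}
            if len(accounts) >= MIN_COORDINATED_ACCOUNTS:
                flagged.setdefault(tag, set()).update(accounts)
    return flagged


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 12, 0)
    # Three accounts push the same hashtag within two minutes; a fourth posts hours later.
    msgs = [Message(f"acct{i}", "#example", t0 + timedelta(minutes=i)) for i in range(3)]
    msgs.append(Message("late_poster", "#example", t0 + timedelta(hours=3)))
    print({tag: sorted(accts) for tag, accts in coordinated_hashtags(msgs).items()})
    # {'#example': ['acct0', 'acct1', 'acct2']}
```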

Existing measures are only partially effective

Legislators have developed tools to tackle various aspects of interference. Examples include increasing the accountability of platforms, intervening in their design and increasing transparency about circulating content. Platforms also have guidelines, known as community guidelines, on what content and activities are prohibited on their platforms.

However, experts point out that platforms do not seem to take even simple measures, such as removing fake accounts. Recommendation systems could also be adjusted to make them less suitable for interference activities.
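As one hypothetical example of such an adjustment (the report does not prescribe this specific design), a ranking signal could count each established account at most once per post and ignore very new accounts. `MIN_ACCOUNT_AGE_DAYS` and the account data are assumptions for illustration; the trade-off is that genuine new users are discounted too.

```python
from dataclasses import dataclass

MIN_ACCOUNT_AGE_DAYS = 30  # hypothetical threshold, for illustration only


@dataclass(frozen=True)
class Interaction:
    account: str
    account_age_days: int


def raw_engagement(interactions: list[Interaction]) -> int:
    """Every interaction counts, so one hyperactive account can inflate the score."""
    return len(interactions)


def dampened_engagement(interactions: list[Interaction]) -> int:
    """Count each sufficiently old account at most once."""
    eligible = {i.account for i in interactions if i.account_age_days >= MIN_ACCOUNT_AGE_DAYS}
    return len(eligible)


if __name__ == "__main__":
    genuine = [Interaction("alice", 400), Interaction("bob", 200), Interaction("carol", 90)]
    new_account = [Interaction("new_account", 2)] * 50  # one fresh account interacting 50 times
    print(raw_engagement(genuine + new_account))  # 53
    print(dampened_engagement(genuine + new_account))  # 3
```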

Furthermore, it is difficult for independent researchers and journalists to grasp the nature, extent and effectiveness of interference via social media platforms, because their research depends on the cooperation of the platform companies. As a result, it is unclear which content circulates more widely than other content and whom it reaches. This makes it difficult to assess whether the measures taken by platforms are effective. Recent examples in the Netherlands and elsewhere in Europe show that recommendation systems offer a range of opportunities for interference activities and therefore pose a risk to online political debate.

Three perspectives for action to reduce the risk of interference

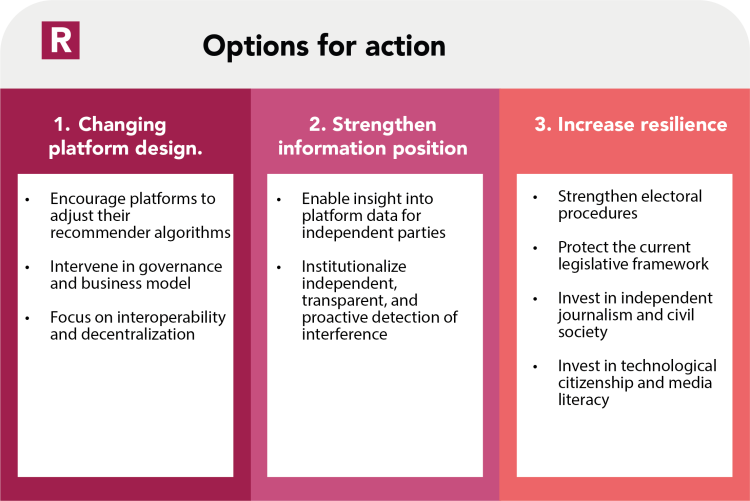

To reduce the risks of interference activities, this report provides options for action in three categories: (1) changing platform design, (2) strengthening the information position, (3) increasing resilience. A combination of these options for action is desirable.

The first category requires adjustments to recommendation systems and a focus on more fundamental changes in the landscape of social media platforms. The second category concerns strengthening the information position of supervisors, researchers, journalists, politicians and policymakers. The third category requires continued protection of electoral processes and current legislation, as well as investment in independent researchers and resilient citizens.

The three perspectives for action, in text:

1. Change platform design

- a. Encourage platforms to adjust recommendation systems.

- b. Intervene in governance and revenue models.

- c. Focus on decentralisation and interoperability.

2. Strengthen information position

- a. Enable insight into platform data for government, supervisors and researchers.

- b. Institutionalise independent, transparent and proactive detection of interference.

3. Increase resilience

- a. Strengthen electoral procedures.

- b. Protect current legislation and clarify where necessary.

- c. Invest in independent journalism.

- d. Invest in technological citizenship and media literacy.

Interested in this subject?

Please download the full Dutch report for all content, or sign up for an English-language webinar on the outcomes of this research (March 26th at 1PM CEST): https://www.rathenau.nl/nl/agenda/webinar-scrollend-naar-de-stembus